What RAG means

RAG (Retrieval-Augmented Generation) is the process by which a generative AI searches external sources for information before producing its response. Instead of relying solely on its training data, the model retrieves content at query time, selects the relevant passages, and grounds its response in them. The result tends to be more accurate, more current, and easier to verify.

This mechanism is why ChatGPT, Perplexity, and Google AI Overviews can cite sources, display links, and provide up-to-date data.

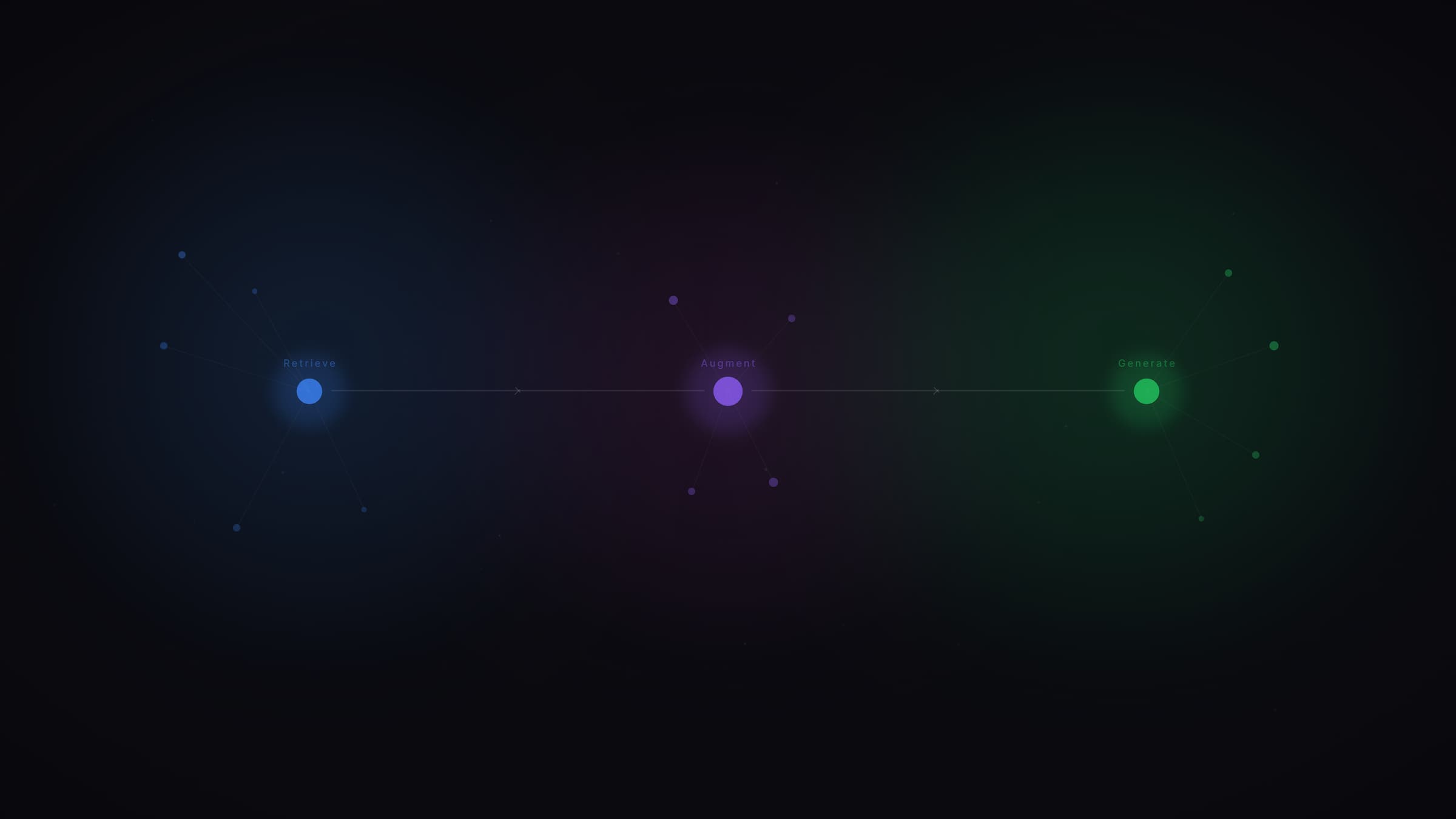

How the RAG pipeline works

RAG follows a multi-step pipeline:

1. Query reception. The user asks a question. The model evaluates whether it needs external information or whether what it learned during training is sufficient.

2. Decomposition and search. The question is reformulated into sub-queries. A search system (web index, vector database) retrieves the most relevant documents.

3. Passage selection. From retrieved documents, the model identifies the specific passages that best answer the question.

4. Response generation. The LLM synthesizes selected passages into a coherent response, with citations to the sources used.
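The steps above can be sketched in a few lines of Python. This is a toy illustration, not how any production engine is implemented: the corpus, the URLs, and every function name are hypothetical, term overlap stands in for a real search index or vector database, and step 4 simply concatenates passages where a real system would hand them to an LLM. Step 1 (deciding whether to retrieve at all) is left out because that decision is made by the model itself.

```python
import re

# Toy corpus standing in for a web index or vector database (hypothetical URLs).
CORPUS = {
    "https://example.com/rag": (
        "RAG retrieves external documents before generation. "
        "Retrieved passages let the model cite its sources."
    ),
    "https://example.com/fine-tuning": (
        "Fine-tuning updates model parameters on specific data. "
        "It requires retraining when the data changes."
    ),
}

STOPWORDS = {"what", "is", "the", "a", "an", "and", "how", "does", "it", "of", "to"}


def terms(text):
    """Lowercase content words, minus a tiny stopword list."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def decompose(question):
    """Step 2a: split a compound question into sub-queries (naive split on 'and')."""
    parts = [p.strip(" ?") for p in re.split(r"\band\b", question)]
    return [p for p in parts if p]


def retrieve(sub_query, k=1):
    """Step 2b: rank documents by term overlap with the sub-query, keep the top k."""
    ranked = sorted(
        CORPUS.items(),
        key=lambda item: len(terms(sub_query) & terms(item[1])),
        reverse=True,
    )
    return ranked[:k]


def select_passage(sub_query, document):
    """Step 3: keep the single sentence that overlaps most with the sub-query."""
    sentences = [s.strip() for s in document.split(".") if s.strip()]
    return max(sentences, key=lambda s: len(terms(sub_query) & terms(s)))


def answer(question):
    """Step 4 (simplified): list the selected passages, each with its source."""
    lines = []
    for sub_query in decompose(question):
        for url, document in retrieve(sub_query):
            lines.append(f"{select_passage(sub_query, document)}. [{url}]")
    return "\n".join(lines)


print(answer("What is RAG and how does fine-tuning work?"))
```

Each output line pairs one extracted sentence with the URL it came from, which is the property that lets a RAG-based engine display citations.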

Why RAG is evolving in 2026

Classical RAG (indexing + retrieval + generation) remains the standard for systems requiring fresh data. But the landscape is shifting.

With extended context windows (Llama 4 Scout advertises up to 10 million tokens), some document tasks that previously required RAG can now be handled by loading the documents directly into the prompt. RAG isn't disappearing, but its role is refocusing on cases where data freshness and verifiability are critical.
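The trade-off between direct ingestion and retrieval can be pictured as a simple budget check. The sketch below is illustrative only: `choose_strategy` and `estimate_tokens` are hypothetical helpers, the four-characters-per-token ratio is a rough rule of thumb for English text rather than a real tokenizer, and the 20% headroom for the question and the answer is an arbitrary assumption.

```python
def estimate_tokens(text, chars_per_token=4):
    """Very rough token estimate; real tokenizers vary by model and language."""
    return len(text) // chars_per_token + 1


def choose_strategy(documents, context_window, needs_fresh_data, reserve=0.2):
    """Return 'direct' when every document fits in the window with headroom, else 'rag'."""
    if needs_fresh_data:
        # Static ingestion cannot supply data newer than the documents on hand.
        return "rag"
    budget = int(context_window * (1 - reserve))
    total = sum(estimate_tokens(doc) for doc in documents)
    return "direct" if total <= budget else "rag"


reports = ["x" * 4000] * 10  # ten documents of roughly 1,000 tokens each

print(choose_strategy(reports, context_window=128_000, needs_fresh_data=False))
print(choose_strategy(reports, context_window=8_000, needs_fresh_data=False))
print(choose_strategy(reports, context_window=128_000, needs_fresh_data=True))
```

The third call returns `rag` even though the documents fit, which mirrors the point above: a larger window does not help when the answer depends on data that is fresher than the documents at hand.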

Modern RAG pipelines also integrate verification mechanisms: the model cross-references information across multiple sources before generating, which helps reduce hallucinations.
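One simple way to picture cross-referencing is a support count: a claim is kept only if it appears on at least two independent domains. This is a sketch under strong assumptions, not a description of any specific engine. The function name `supported_claims` and the sample data are hypothetical, and claims are assumed to be already normalized so that identical facts compare equal as strings.

```python
from urllib.parse import urlparse


def supported_claims(evidence, min_sources=2):
    """Keep claims backed by at least `min_sources` distinct domains.

    `evidence` is a list of (claim, source_url) pairs.
    """
    domains_by_claim = {}
    for claim, url in evidence:
        domains_by_claim.setdefault(claim, set()).add(urlparse(url).netloc)
    return [
        claim
        for claim, domains in domains_by_claim.items()
        if len(domains) >= min_sources
    ]


evidence = [
    ("Product X launched in 2023", "https://a.example/news"),
    ("Product X launched in 2023", "https://b.example/review"),
    ("Product X has 10M users", "https://a.example/press"),
    ("Product X has 10M users", "https://a.example/blog"),  # same domain twice
]

print(supported_claims(evidence))
```

The second claim is dropped because both of its sources share one domain, so they count as a single witness.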

RAG from a GEO strategy perspective

For GEO, RAG is the entry point for your content into AI responses. If your page isn't retrieved by the RAG pipeline, it will never be cited.

What we observe at Vydera: content that passes the RAG filter shares common characteristics:

- Direct answer in the opening paragraphs

- Clear structure with question-format subheadings

- Precise factual data that's verifiable

- Domain authority recognized through external signals

In our observation, long content with vague introductions, marketing jargon, or no sources tends to be passed over by RAG pipelines.

Sources and references

- Aggarwal et al., GEO: Generative Engine Optimization, ACM SIGKDD 2024

- Google Search Central, AI Features and Your Website

- Sebastian Raschka, The State of LLMs 2025

Go further

RAG determines whether your content enters AI responses or stays invisible. At Vydera, we structure our clients' content to maximize retrieval rates by RAG pipelines. See our case studies or explore the Vydera Lab.

Do all LLMs use RAG?

No. Some LLMs answer solely from what they learned during training (parametric mode). Others activate a RAG pipeline for real-time search. ChatGPT Search, Perplexity, and Google AI Overviews use RAG by design. Claude activates it selectively.

How do you optimize content for RAG?

Four practices matter most: place the answer in the first 200 words, structure the page with question-format subheadings, include verifiable factual data, and use structured data (JSON-LD). The model should be able to lift a passage as-is, without having to rephrase it.
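As an illustration of the structured-data point, the sketch below builds schema.org `FAQPage` markup for a question-and-answer pair. The helper name `faq_jsonld` is hypothetical; `FAQPage`, `Question`, `acceptedAnswer`, and `Answer` are standard schema.org vocabulary. Whether a given RAG pipeline actually reads this markup depends on the engine, so treat it as one signal among several.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld(
    [
        (
            "Do all LLMs use RAG?",
            "No. Some LLMs answer solely from what they learned during training; "
            "others activate a RAG pipeline for real-time search.",
        )
    ]
)

# Paste the output inside <script type="application/ld+json"> ... </script>.
print(json.dumps(markup, indent=2))
```

Keeping the `text` field identical to the answer shown on the page means the structured data and the visible content say the same thing.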

Will RAG disappear with larger context windows?

Not entirely. Extended context windows reduce the need for RAG on some document tasks. But for queries requiring fresh and verifiable data, RAG remains essential. Its role is evolving, but it's not going away.

What's the difference between RAG and fine-tuning?

Fine-tuning modifies the model's parameters by training it on specific data. RAG leaves the model intact and supplies information at query time via search. RAG is generally more flexible and less expensive, and it keeps data up to date without retraining.