Key takeaways

- RAG (Retrieval-Augmented Generation) is the mechanism that allows AI systems to search for information in real time before generating a response

- Without RAG, LLMs are limited to their training data. With RAG, they read your pages live to build their answers

- Your content is broken into chunks (passages), converted into vectors, then compared to the user's query using semantic similarity

- What determines whether your content gets cited is the clarity of your passages, not the length of your pages

- Understanding the RAG pipeline is the foundation for optimizing your visibility in AI systems — it's the technical backbone of GEO

RAG (Retrieval-Augmented Generation) is the process by which a generative AI searches external sources for information before producing its response. Rather than relying solely on what it learned during training, the AI fetches content in real time, analyzes it, and uses it to build a more accurate, current, and reliable answer.

This mechanism explains why ChatGPT, Perplexity, and Google AI Overviews can cite sources, display links, and provide up-to-date information. It also determines which websites get cited in AI responses — and which ones get ignored.

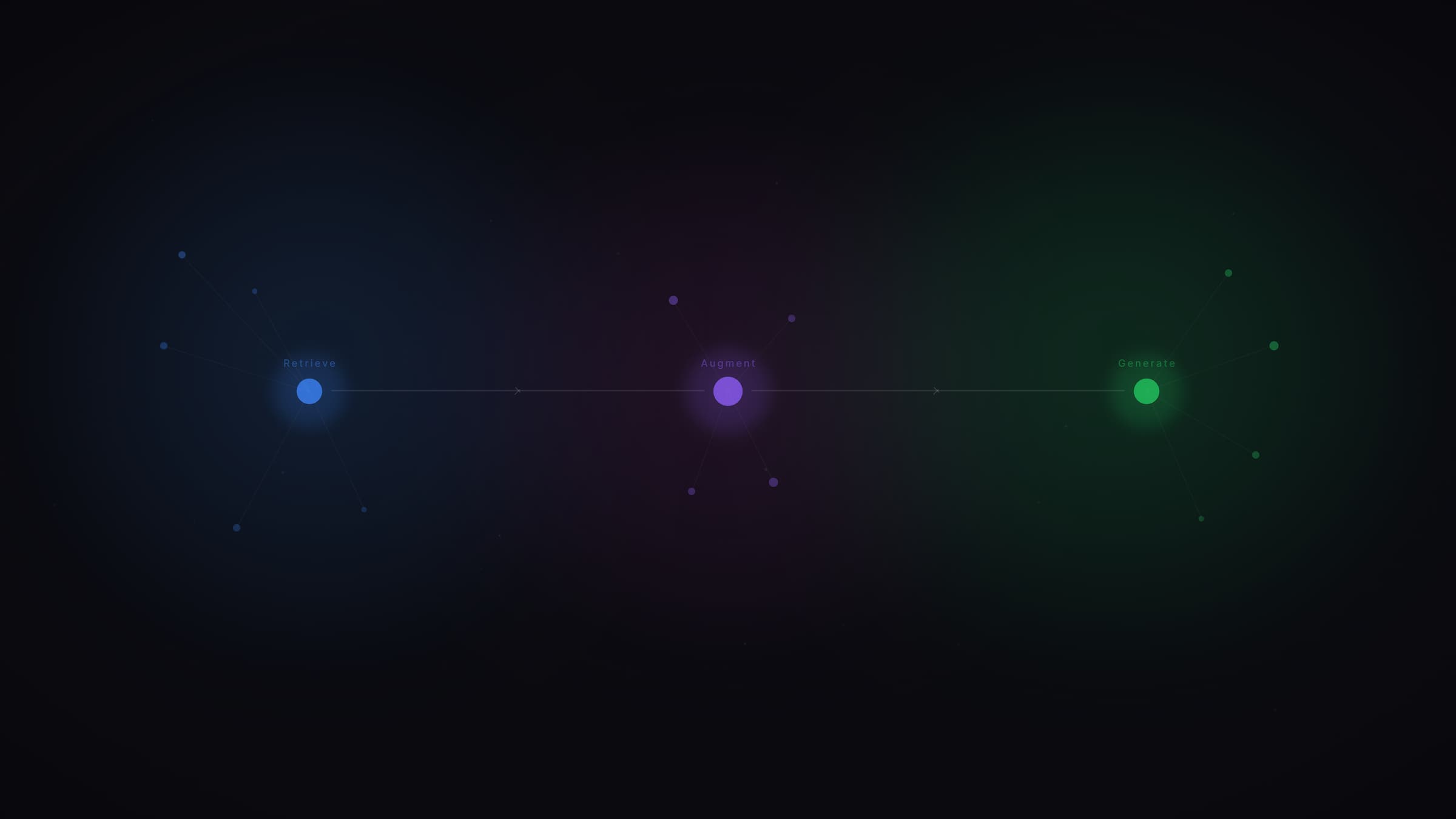

How RAG works: the 5-step pipeline

To understand why some content gets cited by AI systems and other content doesn't, you need to look at what actually happens when a user asks a question.

1. Query reception

The user asks ChatGPT, Perplexity, or Gemini a question. The model analyzes the intent behind the query and determines whether it needs external information to respond. If so, the RAG process kicks in.

2. Content retrieval

The AI searches the web or its indexes to find relevant pages. This step works like a traditional search engine: the system identifies documents most likely to contain the answer. This is also where query fan-out comes in, breaking the question into multiple sub-queries to cover every angle of the topic.

3. Chunking

Retrieved pages aren't processed in their entirety. They're split into passages (called "chunks"), typically a few hundred tokens each (roughly 200 to 800, depending on the system). Splitting is usually done by paragraph, by heading section (for example, each H2), or with a sliding window. This is a fundamental point: the AI doesn't read your page from start to finish. It isolates blocks of information.
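To make the sliding-window option concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not how any particular AI system chunks pages: it counts whitespace-separated words rather than real model tokens, and the function name `sliding_window_chunks` and its `size` and `overlap` parameters are hypothetical.

```python
def sliding_window_chunks(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into overlapping chunks of at most `size` words.

    Words are a rough stand-in for tokens (an assumption of this sketch).
    """
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
print(sliding_window_chunks(sample, size=4, overlap=1))
# ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Because consecutive windows share a few words, a fact that straddles a boundary is more likely to appear whole in one chunk than with a hard cut.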

4. Vectorization and ranking

Each chunk is transformed into a mathematical representation (a vector, or "embedding") that captures its meaning. The user's query is embedded the same way, and the system compares each chunk vector to the query vector using a semantic similarity measure, most commonly cosine similarity. Chunks whose meaning is closest to the question are selected. In general, only the 5 to 20 most relevant passages are kept for the next step.
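Cosine similarity between a query vector q and a chunk vector c is the dot product divided by the product of their lengths: cos(q, c) = (q · c) / (|q| |c|). The sketch below ranks chunks by that score. The two-dimensional vectors are toy values chosen for illustration (real embeddings come from a model and have hundreds or thousands of dimensions), and the names `cosine_similarity` and `top_k` are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], chunk_vecs: dict[str, list[float]], k: int = 5):
    """Return the k (chunk_id, score) pairs most similar to the query."""
    scored = [(cid, cosine_similarity(query_vec, vec)) for cid, vec in chunk_vecs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors, not real embeddings.
chunks = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
best = top_k([1.0, 0.0], chunks, k=2)
print([cid for cid, _ in best])  # ['a', 'c']
```

Chunk "b" points in a different direction from the query and is dropped, which is the toy equivalent of a passage that never reaches the generation step.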

5. Response generation

The LLM receives the selected chunks as context and uses them to build its response. It rephrases, synthesizes, and may add citations to sources. If information is incomplete or contradictory, the system can launch additional searches.
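As a rough illustration of what "receiving the chunks as context" can look like, the sketch below assembles selected passages into a single prompt with numbered sources. The prompt wording, the `build_prompt` helper, and the `url` and `text` keys are all assumptions made for this example; the actual prompts used by ChatGPT, Perplexity, or Gemini are not public.

```python
def build_prompt(question: str, chunks: list[dict]) -> str:
    """Assemble a prompt that lists numbered passages, then the question.

    Each chunk is a dict with hypothetical keys "url" and "text".
    """
    lines = [
        "Answer the question using only the numbered passages below.",
        "Cite passages by number, like [1].",
        "",
    ]
    for number, chunk in enumerate(chunks, start=1):
        lines.append(f"[{number}] ({chunk['url']}) {chunk['text']}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


prompt = build_prompt(
    "What is RAG?",
    [{"url": "https://example.com/a", "text": "RAG retrieves sources before generating."}],
)
print(prompt)
```

The point for content owners is that each passage arrives detached from the rest of its page, so it has to make sense on its own.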

In short: RAG is what transforms a language model into a conversational search engine capable of citing its sources.

What RAG changes for SEO and GEO

RAG doesn't replace SEO. It adds a new selection criterion for your content: the ability to be extracted, understood, and cited by an AI.

As Google's John Mueller has explained, the "retrieval" part of RAG is fundamentally what SEOs have always worked on: making content crawlable, indexable, and accessible. What changes is what happens next. Once found, your content is chunked, vectorized, semantically compared, and used as raw material to build a response. That's a level of precision traditional SEO never required.

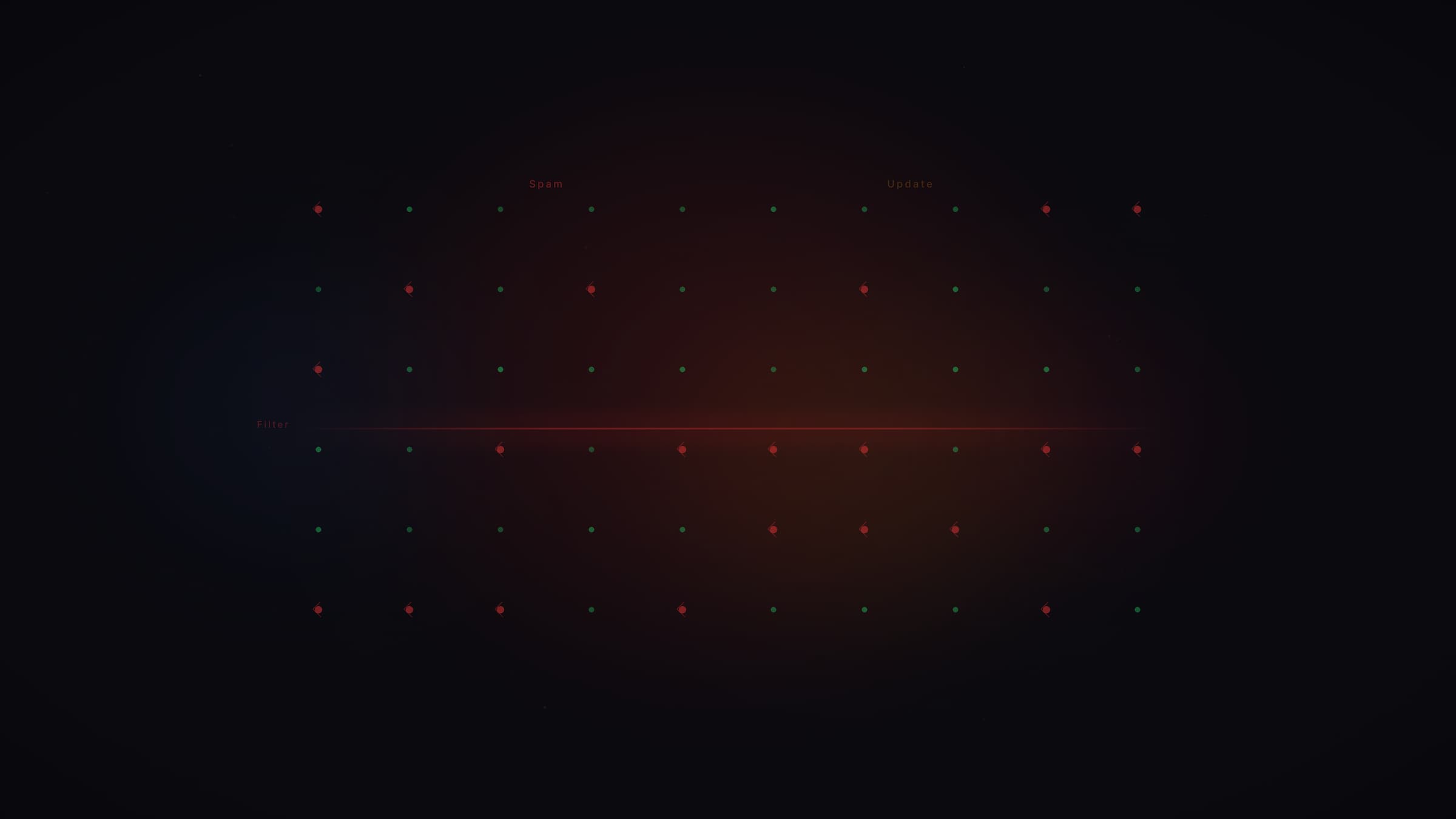

Relevance is determined at the passage level, not the page level

In traditional SEO, you optimize a page for a keyword. In GEO, what matters is the quality of each individual passage. A 3,000-word article can be completely invisible to an AI if none of its paragraphs directly answer a sub-query. Conversely, a well-structured, clear, factual four-sentence paragraph can be extracted and cited on its own.

The RAG system evaluates each chunk independently. The information density of each passage matters more than the overall length of the page. A sentence that delivers a verifiable fact is worth more than a paragraph of vague contextualization.

Semantic similarity replaces keyword matching

RAG systems don't look for exact keywords. They look for matches in meaning. Content about "beginner investment options" can be retrieved for a query like "where to put my money when I know nothing about investing," even if the exact words differ. What matters is the semantic alignment between your content and the query.

In practice, this means that covering a topic in natural language — with variants, synonyms, and paraphrases — is more valuable than repeating the same target phrase.
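The investment example above can be checked with a few lines of Python. This sketch only demonstrates the keyword side: the two texts share no words at all, so an exact-match approach scores them at zero. Showing that their embeddings are close would require an embedding model, which is outside this sketch. The `keyword_overlap` helper is hypothetical.

```python
import re


def keyword_overlap(a: str, b: str) -> float:
    """Jaccard overlap between the lowercase word sets of two texts."""
    words_a = set(re.findall(r"[a-z0-9]+", a.lower()))
    words_b = set(re.findall(r"[a-z0-9]+", b.lower()))
    if not words_a or not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


content = "beginner investment options"
query = "where to put my money when I know nothing about investing"
print(keyword_overlap(content, query))  # 0.0
```

Even "investment" and "investing" count as different words here, which is exactly the gap that meaning-based retrieval is designed to close.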

Content freshness is a strong signal

RAG systems favor recent content for topics that evolve. According to Perplexity data analyzed by Security Boulevard (February 2026), 76.4% of heavily cited pages had been updated within the past 30 days. Clearly displaying publication and update dates, and regularly refreshing your key content, is a direct lever for AI visibility.

Mistakes that make content invisible to RAG

Several common web writing practices mechanically block the retrieval process used by RAG systems.

Long generic introductions

The first chunk of a document is the most critical for retrieval. If your first 300 words are vague context ("in a world that's constantly changing..."), the vector for that chunk will sit far from any specific query, and the AI is unlikely to retrieve it.

Diluted content

An article with two verifiable facts spread across 2,000 words produces chunks with low information density. The embeddings for those passages end up in "empty" zones of the vector space, far from user queries. Every sentence should contribute something new.

Purely promotional content

Passages that sell instead of inform are rarely selected by RAG systems. AI looks for passages that answer questions, not passages that describe the qualities of a product or service. If you have concrete proof points (data, case studies, certifications), include them, but the passage must remain informative first and foremost.

No clear structure

Text without explicit subheadings, short paragraphs, or clear separation between ideas produces incoherent chunks when split. A RAG system can't isolate a precise answer from an undifferentiated block of text.

Pure JavaScript content

Some AI bots don't render JavaScript. If your main content is loaded dynamically, it may be completely invisible to retrieval systems. Essential information must be in static HTML.
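One way to check this yourself is to look at the raw HTML without executing any JavaScript and see whether a key sentence is present as visible text. The sketch below uses Python's standard `html.parser` module; the `VisibleText` class, the `static_text` helper, and the sample markup are hypothetical, and the check approximates what a non-rendering bot might see rather than reproducing any specific crawler.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, ignoring anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def static_text(html: str) -> str:
    """Return the text present in the HTML before any JavaScript runs."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


js_only = '<div id="app"></div><script>render("Fees start at 0.25%");</script>'
static = "<p>Fees start at 0.25%</p>"
print("Fees start at" in static_text(js_only))  # False
print("Fees start at" in static_text(static))   # True
```

In practice you would feed it the raw response body of your page (for example, fetched with `urllib.request.urlopen`) and search for the facts you most want cited.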

For more on structuring best practices, see our guide: How to optimize your content to get cited by AI systems.

RAG and GEO: the core connection

RAG is the technical mechanism. GEO (Generative Engine Optimization) is the discipline of optimizing your content for that mechanism.

Every GEO recommendation traces back to how the RAG pipeline works:

Topical clusters exist to cover all the sub-queries generated by query fan-out during the retrieval phase.

Schema.org structured data (FAQPage, DefinedTerm, etc.) exists to facilitate parsing and chunking by AI systems. Properly marked-up content is easier to extract. We detail the priority schemas in our guide: Structured data: essential for SEO and GEO.

Short paragraphs with a direct answer in the first sentence exist to maximize the quality of each chunk's embedding. The clearer and more self-contained a passage is, the better its vector.

External reputation (press, forums, review platforms) exists because RAG systems apply authority filters to retrieved sources. A domain regularly mentioned in reliable sources gets a preference at selection time.

In other words: understanding RAG means understanding why every GEO action works.
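To illustrate the structured data point, the sketch below builds a FAQPage block in JSON-LD with Python's standard `json` module. The FAQPage, Question, and Answer types and the mainEntity, name, acceptedAnswer, and text properties come from Schema.org; the `faq_jsonld` helper and the sample question are hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as a Schema.org FAQPage in JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


print(faq_jsonld([("Will RAG replace SEO?", "No. RAG depends on SEO to function.")]))
```

The resulting JSON goes inside a `<script type="application/ld+json">` tag in the page's static HTML, alongside the visible question and answer.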

A concrete example: how a fintech company gets cited (or doesn't)

Take a user asking ChatGPT: "What's the best robo-advisor platform in 2026?"

The RAG system will:

- Run multiple searches (fan-out): robo-advisor comparisons, user reviews, 2025–2026 performance, management fees, etc.

- Retrieve the most relevant pages for those sub-queries

- Chunk those pages and vectorize them

- Select the 10–15 semantically closest passages to the question

- Generate a response citing the sources

A site that will get cited: a comparison page with a clear table (name, fees, performance, rating), recent data, a Product or FinancialProduct schema, a structured FAQ, and a visible update date. Each section is self-contained and answers one facet of the question.

A site that won't get cited: a page that covers "robo-advisors" in general terms without comparative data, opens with a long paragraph about "the importance of managing your wealth well," and has no dates, no figures, no extractable structure.

The difference isn't writing quality. It's compatibility with the RAG pipeline.

Our take at Vydera

RAG is the technical foundation of everything we do in GEO. In our visibility audits, the first thing we check is whether a client's content is structured to be retrieved and extracted by RAG systems.

What we consistently see in practice: most sites aren't invisible because their content is bad. They're invisible because their passages aren't self-contained, their introductions are too long, and their factual data is buried in promotional text. These are structural problems, not quality problems.

The other recurring observation: companies that invest in structured data and external reputation get measurable results faster. These aren't the most visible levers — but they're the ones the RAG pipeline rewards most directly.

SEO remains the foundation. RAG is the layer that determines whether that foundation is usable by AI systems. The two work together. To understand how they differ and how they complement each other, see SEO vs GEO: what actually changes.

What is the difference between RAG and an LLM?

An LLM (Large Language Model) is the language model itself. RAG is the process that allows it to access external information in real time. Without RAG, an LLM can only use what it learned during training. With RAG, it can fetch up-to-date content from the web, analyze it, and cite it in its response.

Is RAG used by all generative AI systems?

For the major ones, yes. ChatGPT, Gemini, Perplexity, and Google AI Overviews all use some form of RAG for their web-connected responses. Implementation details vary (sources queried, number of chunks retained, re-ranking methods), but the core process is the same: retrieve, chunk, vectorize, select, generate.

How is RAG different from a traditional search engine?

A traditional search engine gives you a list of links. RAG goes further: it reads the pages, extracts relevant passages, and uses them to build a synthesized answer. Your content is no longer just a destination. It becomes a building block that the AI assembles with other sources to produce its response.

Will RAG replace SEO?

No. RAG depends on SEO to function. The retrieval phase of RAG uses search engine indexes (Google, Bing) to find relevant pages. If your content isn't indexed and doesn't rank in search results, it is unlikely to be picked up by RAG. The two disciplines are complementary.

How do I know if my content is "RAG-compatible"?

Test your key queries in ChatGPT, Perplexity, and Gemini. If your site is never cited, your content probably isn't passing the RAG pipeline's filters; the usual causes are insufficient information density, non-extractable structure, or a lack of authority signals. Our guide on GEO tools details how to automate this tracking.
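If you log the sources cited for each test query, a few lines of Python can turn that log into a simple citation rate. This is a sketch under assumptions: the `citation_rate` helper and the sample data are hypothetical, and collecting the cited URLs (by hand or with a tool) is left out.

```python
from urllib.parse import urlparse


def citation_rate(domain: str, cited_urls_by_query: dict[str, list[str]]) -> float:
    """Share of queries whose cited URLs include the domain or a subdomain of it."""
    domain = domain.lower()

    def matches(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        return host == domain or host.endswith("." + domain)

    if not cited_urls_by_query:
        return 0.0
    hits = sum(any(matches(u) for u in urls) for urls in cited_urls_by_query.values())
    return hits / len(cited_urls_by_query)


# Hypothetical log: query -> URLs cited in the AI's answer.
log = {
    "best robo-advisor 2026": ["https://www.example.com/compare", "https://other.org/review"],
    "robo-advisor fees": ["https://notexample.com/fees"],
}
print(citation_rate("example.com", log))  # 0.5
```

Tracking this number over time for the same set of queries gives a rough before-and-after view when you restructure content.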
