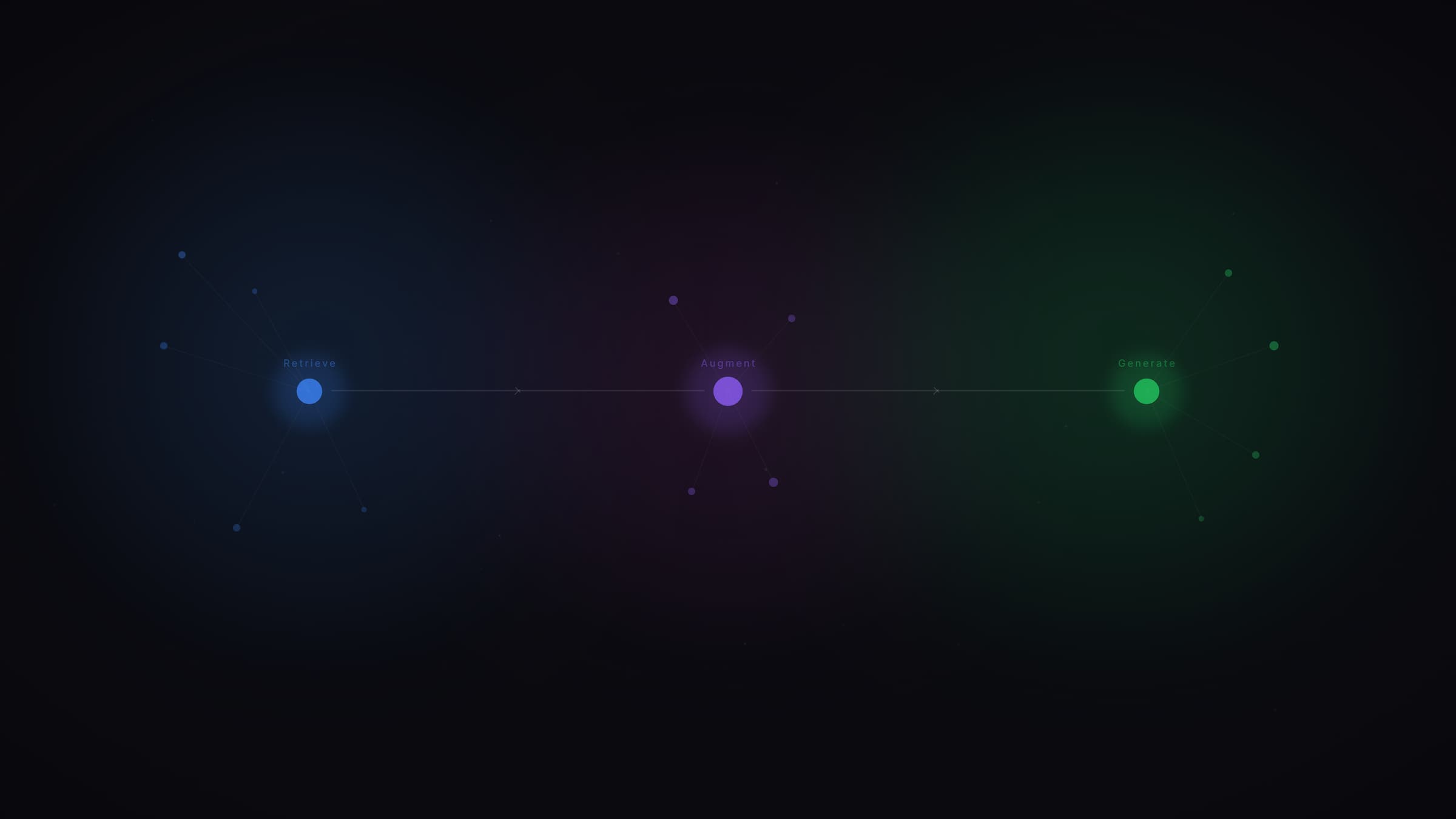

What is query fan-out?

Query fan-out is the process by which an AI search system decomposes a complex question into multiple parallel sub-queries to build a more complete and reliable answer. When you type "best project management tool for a remote team", the model doesn't search for that exact phrase. It silently generates variants: "project management software comparison", "remote team collaboration tools", "Asana vs Trello for distributed teams". These sub-queries determine which sources get cited in the final response. Your content is evaluated passage by passage, not page by page.
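
To make the mechanism concrete, here is a minimal Python sketch of fan-out. It rests on loud assumptions: `expand` hard-codes the three variants from the example above (a real system would generate them with a model), `PASSAGES` is a made-up corpus, and simple word overlap stands in for real retrieval. It illustrates the idea and does not describe how any particular AI search engine is built.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical corpus: the unit of retrieval is a passage, not a page.
PASSAGES = {
    "page-a#pricing": "Asana vs Trello pricing for distributed teams",
    "page-a#intro": "What project management software is",
    "page-b#remote": "Remote team collaboration tools compared",
}


def expand(question):
    """Stand-in for the model's decomposition step (hard-coded here)."""
    return [
        "project management software comparison",
        "remote team collaboration tools",
        "Asana vs Trello for distributed teams",
    ]


def retrieve(sub_query):
    """Return (sub_query, id of the passage sharing the most words with it)."""
    terms = set(sub_query.lower().split())
    best_id, best_score = None, 0
    for passage_id, text in PASSAGES.items():
        score = len(terms & set(text.lower().split()))
        if score > best_score:
            best_id, best_score = passage_id, score
    return sub_query, best_id


def fan_out(question):
    """Run every sub-query in parallel and collect the winning passage for each."""
    with ThreadPoolExecutor() as pool:
        return dict(pool.map(retrieve, expand(question)))


citations = fan_out("best project management tool for a remote team")
for sub_query, passage_id in citations.items():
    print(f"{sub_query!r} -> {passage_id}")
```

In this toy run, each sub-query selects a different passage, and two of the three come from the same page. That is the passage-by-passage logic in miniature: a page can win one sub-query with one section and lose another.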

Query fan-out in 2026: one question triggers 8 to 15 sub-queries

Data reported by 85SIXTY, covering more than 72,000 AI-generated queries, shows that a single question to ChatGPT or Gemini triggers an average of 8 to 10 parallel sub-queries, with complex "best X for Y" queries reaching up to 15. 95% of these sub-queries have zero monthly search volume in traditional SEO tools: they never appear in your reports, yet they govern your generative visibility. Google has formalized this mechanism in two major patents: US20240289407A1 ("Search with Stateful Chat", August 2024) and the PROMPTAGATOR patent WO2024064249A1 (March 2024), which describes a system generating 7 to 9 types of synthetic queries from a single user question. Query fan-out sits at the core of the RAG (Retrieval-Augmented Generation) architecture: it strengthens the retrieval phase by querying the index from multiple angles, which reduces hallucinations and grounds responses in verifiable sources.

What we observe at Vydera about content ignored by AI

Monolithic content, a single long page on a topic, consistently underperforms in AI citations. Not because the quality is poor, but because it only covers one angle of the question. An article explaining "what an LMS is" will be selected for the definition sub-query but ignored for "LMS comparison for SMBs", "GDPR-compliant LMS", or "LMS deployment cost for 500 employees". This fundamentally changes the logic of content production. It's no longer one article = one query. It's one content cluster = one question with all its implicit dimensions. The brands winning AI visibility are those covering all the angles a model considers relevant when answering a given intent.
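
A rough way to spot these gaps is to compare a list of sub-queries against the headings you already publish. The Python sketch below reuses the LMS example; the heading lists, the stopword set, and the 0.6 overlap threshold are all assumptions chosen for illustration, and keyword overlap is only a crude proxy for how a model actually matches passages.

```python
import re

# Small illustrative stopword list (an assumption, not a standard set).
STOPWORDS = {"what", "is", "an", "a", "the", "for", "of", "to"}


def key_terms(text):
    """Lowercase alphanumeric tokens, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def uncovered(sub_queries, headings, threshold=0.6):
    """Return sub-queries whose key terms no heading covers well enough."""
    gaps = []
    for sub_query in sub_queries:
        terms = key_terms(sub_query)
        if not terms:
            continue  # nothing meaningful to match
        best = max(
            (len(terms & key_terms(h)) / len(terms) for h in headings),
            default=0.0,
        )
        if best < threshold:
            gaps.append(sub_query)
    return gaps


sub_queries = [
    "what is an LMS",
    "LMS comparison for SMBs",
    "GDPR-compliant LMS",
    "LMS deployment cost for 500 employees",
]

# Hypothetical headings: one monolithic article vs. a content cluster.
single_article = ["What is an LMS?", "How an LMS works"]
cluster = single_article + [
    "LMS comparison for SMBs",
    "Choosing a GDPR-compliant LMS",
    "LMS deployment cost: 500 employees",
]

print(uncovered(sub_queries, single_article))
print(uncovered(sub_queries, cluster))
```

With these inputs, the single article only covers the definition sub-query and leaves the other three uncovered, while the cluster leaves none.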

How to structure content to cover sub-queries

The most reliable method is to start from the target question and map its sub-queries before producing content. In practice:

- Use the "Steps" or "Sources" tab in Perplexity or Gemini on your target query to see the sub-queries the model actually generates.

- Cross-reference with Google's "People Also Ask" and your Search Console data.

- Structure each section to answer a specific sub-query, with an H2 that frames the question and a direct answer in the first 60 words.

- FAQ format with FAQPage structured data is especially effective: each question-and-answer pair is a self-contained passage that can match a single sub-query during fan-out.

Content that covers sub-queries comprehensively multiplies your chances of being selected during the fan-out process, regardless of your SEO position on the main query.
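
For the FAQPage structured data mentioned in the list above, here is a minimal Python sketch that builds the JSON-LD from question-and-answer pairs. The `@type` values and the `mainEntity` / `acceptedAnswer` properties follow the schema.org vocabulary; the function name `faq_page_jsonld` and the sample pair are illustrative assumptions, and you would adapt the output to however your CMS injects markup.

```python
import json


def faq_page_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Sample pair for illustration: one question per target sub-query.
markup = faq_page_jsonld([
    (
        "How does query fan-out affect a site's AI visibility?",
        "Query fan-out determines which sources are extracted for each "
        "sub-query, so visibility depends on topical coverage.",
    ),
])

# JSON-LD is typically embedded in the page inside a script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping one question per sub-query means each answer stays short and self-contained, which is the same property the H2 guideline above aims for.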

Sources and references

- Query fan-out: the core LLM mechanism reshaping SEO and GEO, Vydera Lab

- How AI Query Fan-Out Is Reshaping SEO in 2026, 85SIXTY (72,000+ queries analyzed)

- PROMPTAGATOR patent WO2024064249A1, Google (March 2024)

How does query fan-out affect a site's AI visibility?

Query fan-out determines which sources are extracted for each sub-query. If your content addresses the main angle but not the implicit secondary angles, you'll be cited partially or not at all. AI visibility depends on the topical coverage of your content, not only on your SEO position for the main query.

Do all LLMs use query fan-out in the same way?

No. There are notable differences across platforms. Google Gemini orchestrates sub-queries across multiple sources (web index, Knowledge Graph, Google Shopping). ChatGPT with search enabled generates sub-queries that are visible in the page's JavaScript code during generation. Perplexity displays its sub-queries in the "Steps" tab. The underlying logic is the same, but fan-out patterns vary with each model and its training data.

How should I structure content to cover query fan-out sub-queries?

The most effective approach is to start from the target question, identify its sub-queries via Perplexity or Gemini (Steps or Sources tab), then structure each H2 section to answer a specific sub-query, with the direct answer in the first 60 words. FAQ format with FAQPage schema natively matches the format LLMs exploit during fan-out. A multi-page corpus covering all angles of a topic outperforms a single comprehensive article.
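
To review the "first 60 words" rule at scale, a small script can pull the opening of every H2 section for a quick read-through. The sketch below assumes your drafts are written in Markdown with `## ` headings, which may not match your setup; the function name `openings_by_h2` is hypothetical, and the script only extracts text. Judging whether those words really contain a direct answer remains a human call.

```python
import re


def openings_by_h2(markdown, limit=60):
    """Map each '## ' heading to the first `limit` words of its section."""
    # With one capturing group, re.split returns
    # [preamble, heading, body, heading, body, ...].
    parts = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    openings = {}
    for heading, body in zip(parts[1::2], parts[2::2]):
        openings[heading.strip()] = " ".join(body.split()[:limit])
    return openings


# Made-up draft used only to exercise the function.
draft = (
    "## What is an LMS?\n"
    "An LMS is software for delivering training.\n\n"
    "## LMS deployment cost\n"
    + " ".join(["word"] * 80)
)

for heading, opening in openings_by_h2(draft).items():
    print(f"{heading}: {opening}")
```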

Does query fan-out work differently depending on query type?

Yes. Simple, factual queries may not trigger fan-out: the model responds from its training data. Complex, comparative, or time-sensitive queries trigger the most sub-queries. Queries framed as "best X for Y" or "how to do X in context Y" systematically generate 8 to 15 parallel sub-queries. The more nuance a query implies, the broader the fan-out.