What an LLM is

An LLM (Large Language Model) is an artificial intelligence model with billions of parameters, trained on massive amounts of text data. It can interpret natural language, generate text, answer questions, and perform complex tasks such as document synthesis, translation, and code generation.

LLMs are built on the Transformer architecture, introduced by Google researchers in 2017. Its attention mechanism lets a model weigh the relative importance of each word in a sentence against all the others, producing a contextual understanding that earlier models couldn't achieve.
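The word weighting described above is computed by the Transformer's attention mechanism. As a minimal sketch, the snippet below computes scaled dot-product attention weights for one word over a three-word sentence. The tokens and 2-dimensional vectors are made up for illustration; real models use learned projections, many attention heads, and vectors with thousands of dimensions.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Hypothetical 2-dimensional vectors for a three-word sentence.
tokens = ["the", "bank", "river"]
vectors = [[0.1, 0.0], [1.0, 0.5], [0.9, 0.6]]

# How much the word "bank" attends to each word in the sentence.
weights = attention_weights(vectors[1], vectors)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.2f}")
```

In this toy setup, "bank" puts far more weight on "river" than on "the", which is the kind of signal that lets a model resolve which sense of "bank" is meant.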

Key LLMs in 2026:

- GPT-4o / GPT-5 (OpenAI): power ChatGPT

- Gemini 2.5 (Google): integrated into Google Search and AI Overviews

- Claude (Anthropic): focused on safety and long reasoning

- Llama 4 (Meta): open-weight, up to 10 million tokens of context (Llama 4 Scout)

- Mistral (Mistral AI): competitive on performance/cost ratio

What's changing for LLMs in 2026

The LLM ecosystem has evolved dramatically. The global market is estimated at over $10 billion, and 67% of organizations have already adopted LLMs in their operations.

Three structural trends:

- Agentic capabilities: LLMs no longer just generate text. They plan and execute tasks autonomously, interacting with tools and APIs via protocols like MCP (Model Context Protocol)

- Extended context windows: Llama 4 Scout reaches 10 million tokens. This evolution reduces reliance on classical RAG for document queries

- Multimodality: models now process text, images, audio, and video simultaneously

The gap between open-weight and proprietary models is narrowing. The lag was about a year in 2024 and dropped to about six months in 2025. Open-weight models are increasingly viable for sovereign and private deployments.

Why LLMs are at the core of GEO

For any AI visibility strategy, understanding LLMs is not optional. They're the ones that decide which sources to cite in their responses. And each LLM has its own preferences.

What we see at Vydera: citation algorithms vary significantly across LLMs. ChatGPT relies heavily on Bing and its training data. Perplexity favors fresh, well-structured sources. Claude gives less weight to web search and more to its knowledge base. Google AI Overviews draws from its own index.

Optimizing for a single LLM isn't enough. An effective GEO strategy accounts for the specifics of each model.

How LLMs select their sources

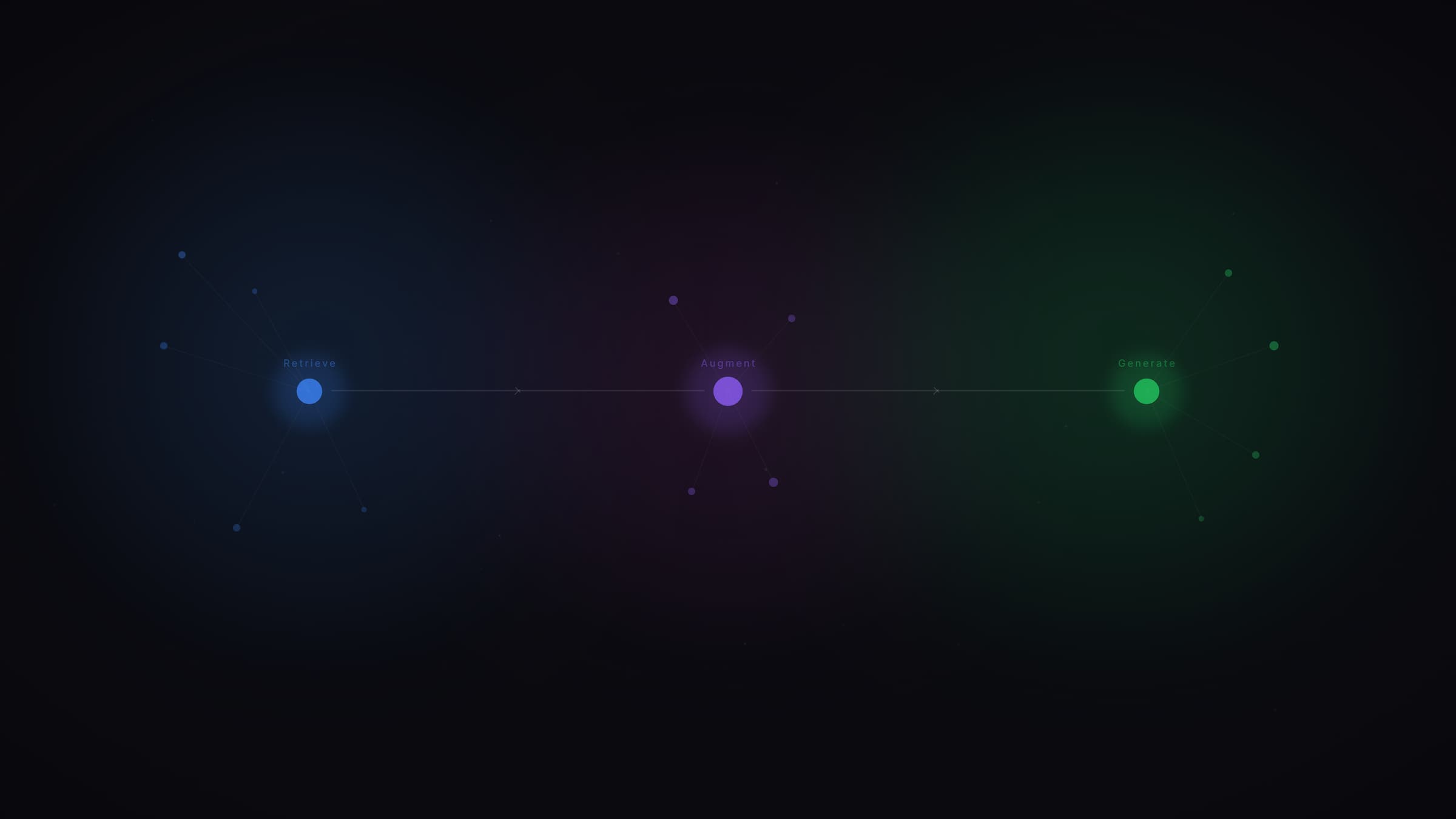

LLMs with web search (ChatGPT Search, Perplexity, AI Overviews) use a multi-step pipeline:

1. Query decomposition. The model breaks the question into parallel sub-queries (query fan-out).

2. Source retrieval. A RAG system retrieves the most relevant content from a web index or vector database.

3. Selection and synthesis. The LLM evaluates relevance, credibility, and freshness, then synthesizes its response citing the sources it deems most reliable.

Your content must survive each of these steps to get cited.
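The three steps above can be sketched as a toy pipeline. This is an illustration under stated assumptions, not how any real answer engine is implemented: the `Doc` fields, the rule of splitting on " and ", the keyword-overlap relevance, and the 0.5 / 0.3 / 0.2 scoring weights are all hypothetical, and the URLs are placeholders.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    url: str
    text: str
    credibility: float  # hypothetical trust score between 0 and 1
    age_days: int


def decompose(question):
    """Step 1: split a question into sub-queries (toy rule: split on ' and ')."""
    parts = question.lower().rstrip("?").split(" and ")
    return [part.strip() for part in parts if part.strip()]


def relevance(sub_query, doc):
    """Share of sub-query words that appear in the document text."""
    words = set(sub_query.split())
    doc_words = set(doc.text.lower().split())
    return len(words & doc_words) / len(words)


def retrieve(sub_query, index, k=2):
    """Step 2: keep the k most relevant documents for one sub-query."""
    ranked = sorted(index, key=lambda d: relevance(sub_query, d), reverse=True)
    return [d for d in ranked[:k] if relevance(sub_query, d) > 0]


def select(sub_queries, candidates, max_citations=2):
    """Step 3: rank candidates on relevance, credibility, and freshness."""
    def score(doc):
        best_relevance = max(relevance(q, doc) for q in sub_queries)
        freshness = 1 / (1 + doc.age_days / 365)
        return 0.5 * best_relevance + 0.3 * doc.credibility + 0.2 * freshness

    unique = {d.url: d for d in candidates}.values()  # drop duplicate URLs
    return sorted(unique, key=score, reverse=True)[:max_citations]


# Hypothetical index of four pages.
index = [
    Doc("https://example.com/llm-guide", "what is an llm and how large language models work", 0.9, 30),
    Doc("https://example.com/old-post", "what is an llm", 0.4, 2000),
    Doc("https://example.com/geo", "how llms cite sources in generative engine optimization", 0.8, 10),
    Doc("https://example.com/recipes", "ten pasta recipes", 0.9, 5),
]

sub_queries = decompose("What is an LLM and how do LLMs cite sources?")
candidates = [doc for q in sub_queries for doc in retrieve(q, index)]
cited = select(sub_queries, candidates)
for doc in cited:
    print(doc.url)
```

In this sketch, the old post is retrieved but loses at the selection step because of its low credibility and age, and the off-topic page never gets past retrieval. A page has to clear every stage to end up in the citations.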

Sources and references

- Sebastian Raschka, The State of LLMs 2025: Progress and Predictions

- Aggarwal et al., GEO: Generative Engine Optimization, ACM SIGKDD 2024

- Hostinger, LLM Statistics 2026: Adoption, Trends, and Market Insights

Go further

Understanding LLMs means understanding the rules of AI visibility. At Vydera, we analyze citation behavior across models to adapt our clients' content strategy. See our case studies or explore the Vydera Lab.

What's the difference between an LLM and generative AI?

An LLM is a type of generative AI specialized in language. Generative AI is a broader term that also includes image generation (Midjourney, DALL-E), video, music, and code. LLMs are the models that power chatbots and AI answer engines.

Will LLMs replace Google?

They won't replace it, but they are fundamentally transforming search behavior. Gartner predicts a 25% drop in traditional search volume by 2026. Google itself integrates LLMs via AI Overviews. Search isn't disappearing; it's being redistributed across multiple platforms.

Do all LLMs cite the same sources?

No, and this is a critical point for GEO. Each LLM has its own selection mechanisms. ChatGPT relies on Bing, Perplexity on its own web search, Claude on its knowledge base, Gemini on Google's index. The overlap between AI citations and Google's top 10 is only 12% according to Ahrefs.
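To make an overlap figure like this concrete, here is a minimal sketch of how such a ratio could be computed for a single query. The definition used (share of AI-cited URLs that also rank in Google's top 10) is an assumption for illustration, since the exact Ahrefs methodology is not described here, and the URLs are placeholders.

```python
def citation_overlap(ai_citations, google_top10):
    """Share of AI-cited URLs that also appear in Google's top 10."""
    if not ai_citations:
        return 0.0
    top10 = set(google_top10)
    shared = [url for url in ai_citations if url in top10]
    return len(shared) / len(ai_citations)


# Hypothetical data for a single query.
ai_citations = [
    "https://example.com/a",
    "https://example.org/b",
    "https://example.net/c",
    "https://example.io/d",
]
google_top10 = [
    "https://example.com/a",
    "https://example.com/x",
    "https://example.com/y",
]

overlap = citation_overlap(ai_citations, google_top10)
print(f"{overlap:.0%} of AI citations also rank in Google's top 10")
```

Averaging this ratio over many queries and several LLMs gives a rough view of how far AI citations diverge from classic rankings.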

What's the difference between open-source and proprietary models?

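A proprietary model (GPT, Gemini, Claude) keeps its training data and parameters confidential. An open-weight model (Llama, Mistral) publishes its weights, enabling local deployment and full data control. Strictly speaking, open-weight is not the same as open-source: the weights are public, but the training data and training code often are not. The performance gap between the two is narrowing rapidly and may close in 2026.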
